In this post, I’ll implement a simple Deep Learning model to predict optimal task distribution across an hypothetical (and intentionally simplified) supply chain scenario. We’ll implement the model, load the data, train and evaluate it.

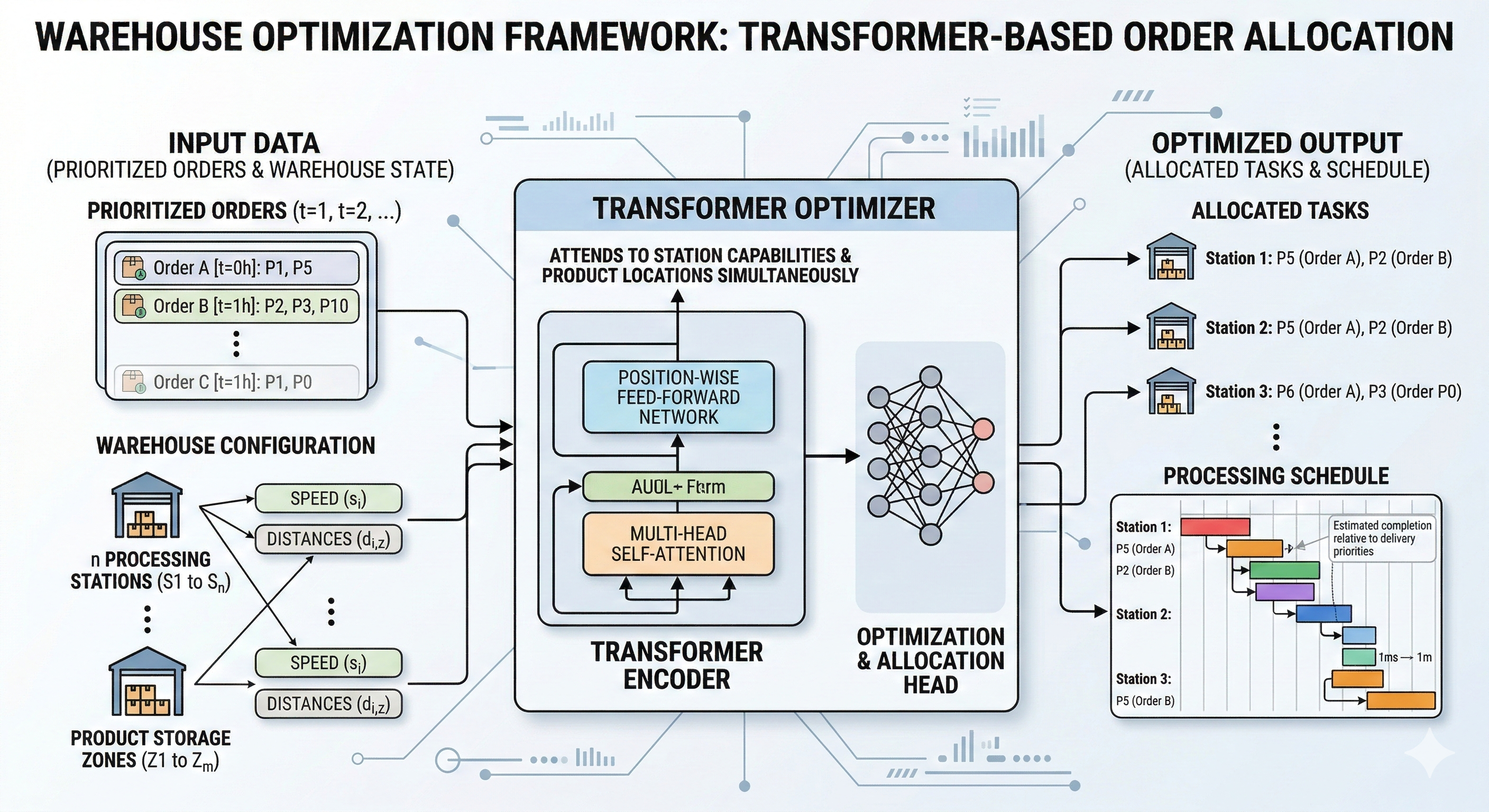

The scenario: Imagine we have a warehouse, where there are n stations capable of processing products. Each station has a distinct distance d from each product storage, and a speed s for processing each product. We need to process orders, each one consisting of a list of products. The orders are previously prioritized by the time they need to be delivered.

Motivation: We could encode the rules for this task using classical computing techniques (conditions and loops), and not have to prepare and load a lot of data, but that approach wouldn’t give us flexibility to deal with different warehouses, or changing conditions that a very dynamic environment would present us with. Using Machine Learning, we will be free from devising detailed rules that can change at any moment, and we’d be easily able to feed the model with real-time data, allowing it to learn new patterns during operation.

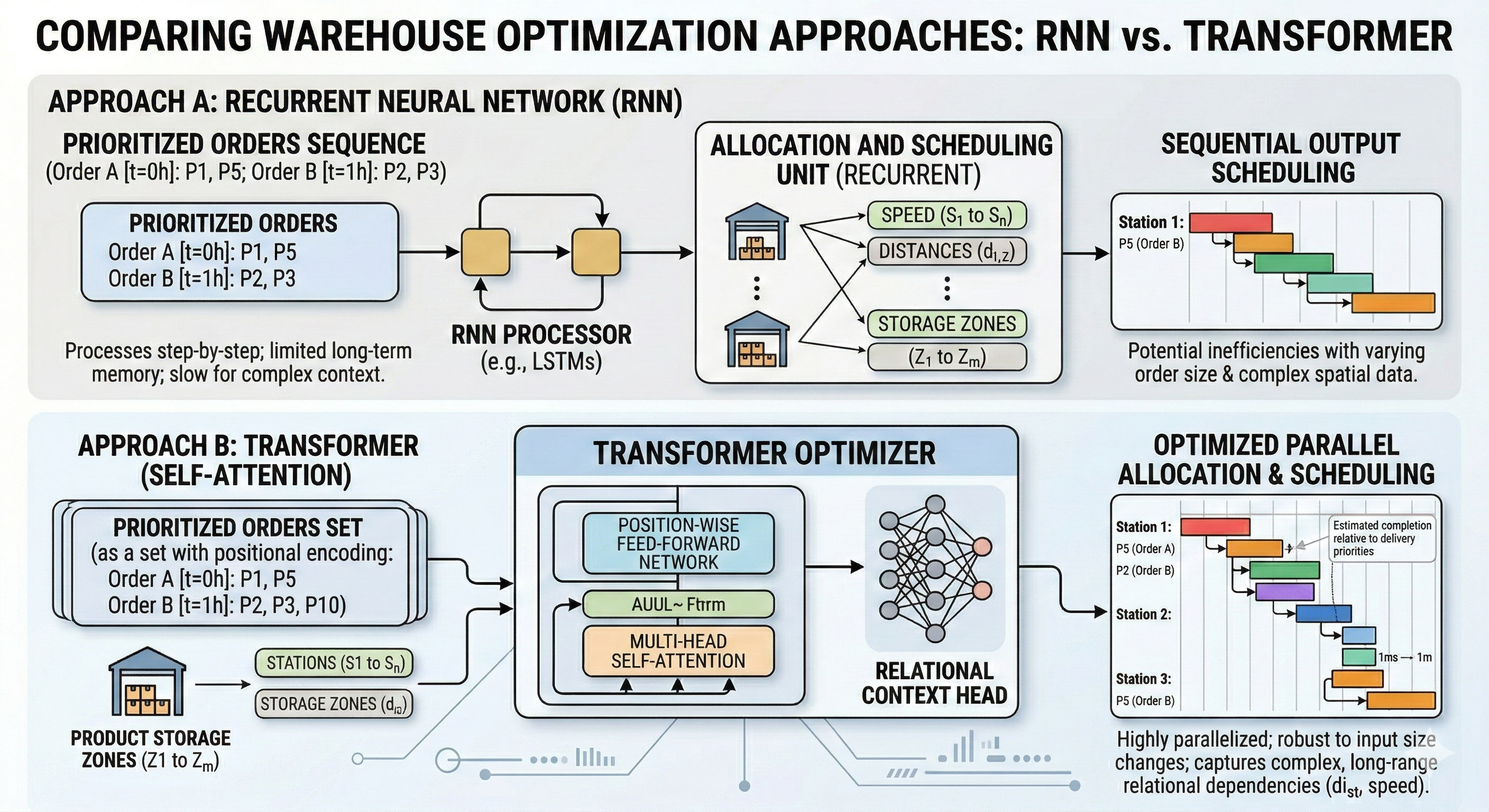

Available options: Since orders can have an arbitrary number of products, we need to create a model capable of dealing with inputs of any size. To achieve this, we could use Recurrent Neural Networks, or Transformers.

- Recurrent Neural Networks (RNNs): RNNs process data sequentially, one element at a time. They maintain a “hidden state” that acts as a memory of previous inputs. However, they struggle with “vanishing gradients,” making it difficult for them to remember information from the beginning of a long sequence by the time they reach the end.

- Transformers: Unlike RNNs, Transformers use a Self-Attention mechanism to process the entire input sequence simultaneously (parallelization). This allows the model to weigh the importance of every product in an order relative to every other product and station, regardless of their position in the list.

Why Transformers are the Ideal Solution

In our warehouse scenario, the “sequence” isn’t necessarily chronological—it’s a complex relational set. Transformers are superior here for three specific reasons:

- Global Context: A Transformer can simultaneously compare a product’s storage distance (d) and a station’s speed (s) across the entire order. It doesn’t “forget” the first product by the time it analyzes the tenth.

- Order Prioritization: Since our orders are pre-prioritized by delivery time, Transformers can use Positional Encodings to maintain this hierarchy while still calculating the most efficient physical path for the picker.

- Scalability: Because they don’t process data step-by-step, Transformers are significantly faster to train and more adept at handling massive “rush hour” spikes where the number of products (n) per order varies wildly.

The model:

Model’s input will be a list of orders, each consisting of a list products, that will be just a list of integers, each one representing the indexes of product in a list of products:

my_input = torch.tensor([[0, 1, 2, 3], [4, 3, 2, 0]])The output is a list of the indexes of the stations each product should be assigned to:

model(my_input)

> [[3, 4, 4, 3], [3, 0, 4, 3]]To achieve that, we need first to define the layers of the model:

First we define the embeddings, it is like a giant sticker book. Every item in your supply chain gets its own special sticker (a long list of numbers) that describes what it is.

self.embedding = nn.Embedding(vocab_size, self.d_model, padding_idx=pad_idx)Next, we define a positional encoder, it is like a calendar. It tells the brain when things happened. If a “truck” arrives on Monday, it’s different than a “truck” arriving on Friday. (The 5k is the number of items we’re able to handle in a single order).

self.pos_encoder = nn.Parameter(torch.randn(1, 5000, self.d_model))Then we define a transformer encoder. Think of it as a super-powered Reading Club for robots.

encoder_layer = nn.TransformerEncoderLayer(d_model=self.d_model, nhead=8, batch_first=True)

self.transformer_encoder = nn.TransformerEncoder(encoder_layer, num_layers=4)Imagine you give a group of friends a long sentence to read. In the old days, robots had to read one word at a time, like walking down a dark hallway with a tiny flashlight. They would often forget the beginning of the sentence by the time they got to the end! The Transformer Encoder is different. It looks at the whole page.

The Encoder has many “heads” (usually 8, like an octopus).

- One head might focus on who is doing the action.

- Another head might focus on where they are.

- Another head looks for colors or sizes. By looking at the sentence 8 different ways at once, it understands the story much better than a human could!

We have num_layers=4. This means the sentence goes through 4 different clubs in a row.

- Club 1 figures out the easy stuff (like which words are nouns).

- Club 2, 3, and 4 figure out the complicated stuff (like the “vibe” of the sentence or the secret meaning behind the words).

Finally, we have a linear layer that makes our output assume the expected form, that is: a list containing the probabilities of each station being the ideal one, something like that:

> [0.25, 0.5, 0.125, 0.124, 0.001]To find out what’s the best station for a product to be processed by, we just take the index of the highest probability (1, in this case).

The complete model:

class SupplyChainModel(nn.Module):

def __init__(self, vocab_size, pad_idx=0):

super().__init__()

self.pad_idx = pad_idx

self.d_model = 256

self.embedding = nn.Embedding(vocab_size, self.d_model, padding_idx=pad_idx)

self.pos_encoder = nn.Parameter(torch.randn(1, 5000, self.d_model))

encoder_layer = nn.TransformerEncoderLayer(d_model=self.d_model, nhead=8, batch_first=True)

self.transformer_encoder = nn.TransformerEncoder(encoder_layer, num_layers=4)

self.out = nn.Linear(self.d_model, vocab_size)

def forward(self, x):

# Create mask: True where x is the padding index

mask = (x == self.pad_idx)

x_emb = self.embedding(x) + self.pos_encoder[:, :x.size(1), :]

# Pass the mask to the transformer

x_enc = self.transformer_encoder(x_emb, src_key_padding_mask=mask)

return self.out(x_enc)With all that, we can make a simple function to run the inference:

def predict(model, input_list):

# 1. Put model in evaluation mode (turns off Dropout, etc.)

model.eval()

with torch.no_grad():

# 2. Forward Pass (Get raw scores)

logits = model(input_list) # Shape: [1, Seq_Len, Vocab_Size]

# 3. Get the most likely token ID for each position

predictions = torch.argmax(logits, dim=-1) # Shape: [1, Seq_Len]

# 4. Return as a simple Python list

return predictionsAnd a training loop:

for epoch in range(epochs):

total_loss = 0

model.train()

for sequence, result in loader:

optimizer.zero_grad()

# Forward pass

logits = model(sequence)

# Flatten for CrossEntropy: (Batch * Seq, Vocab) vs (Batch * Seq)

loss = criterion(logits.view(-1, vocab_size), result.view(-1))

loss.backward()

optimizer.step()

total_loss += loss.item()

if (epoch + 1) % 10 == 0:

print(f"Epoch {epoch+1} | Loss: {total_loss/len(loader):.4f}")Running everything together, we have this:

Input: [[0, 1, 2, 3], [4, 3, 2, 0]]

Result: [[3, 4, 4, 3], [3, 0, 4, 3]]

Epoch 10 | Loss: 0.0075

Epoch 20 | Loss: 0.0020

Epoch 30 | Loss: 0.0007

Epoch 40 | Loss: 0.0004

Epoch 50 | Loss: 0.0004

Training Complete!

Result after training: [[4, 3, 2, 1], [1, 2, 3, 1]]Conclusion: why use such specific model?

While Large Language Models (LLMs) are amazing at writing poems or coding, they are often overkill for specific industrial tasks. This custom Transformer architecture is the better tool for the job because an LLM has billions of parameters, which costs a fortune to run. Our model is compact (with a d_model of 256 and only 4 layers). It also is streamlined, it can process thousands of shipping data points in milliseconds, it’s orders of magnitude faster. It’s the difference between waiting for a long email reply and getting an instant text message. Finally, we’re using a model that wasn’t trained to translate French or make cat images, making the accuracy skyrocket.